Generative AI has transformed how enterprises build intelligent systems—from conversational agents and copilots to multimodal content engines. However, behind every high-performing model lies a critical foundation: high-quality annotated data. For organizations training and optimizing large language models (LLMs), annotation is no longer a simple labeling exercise. It is a strategic process that directly impacts model accuracy, safety, and scalability.

At Annotera, we work closely with enterprises navigating the complexities of Generative AI training pipelines. From supervised fine-tuning to RLHF workflows, businesses face several persistent annotation challenges that can affect model outcomes. In this article, we explore the top challenges in data annotation for Generative AI models and how partnering with a reliable data annotation company can help overcome them.

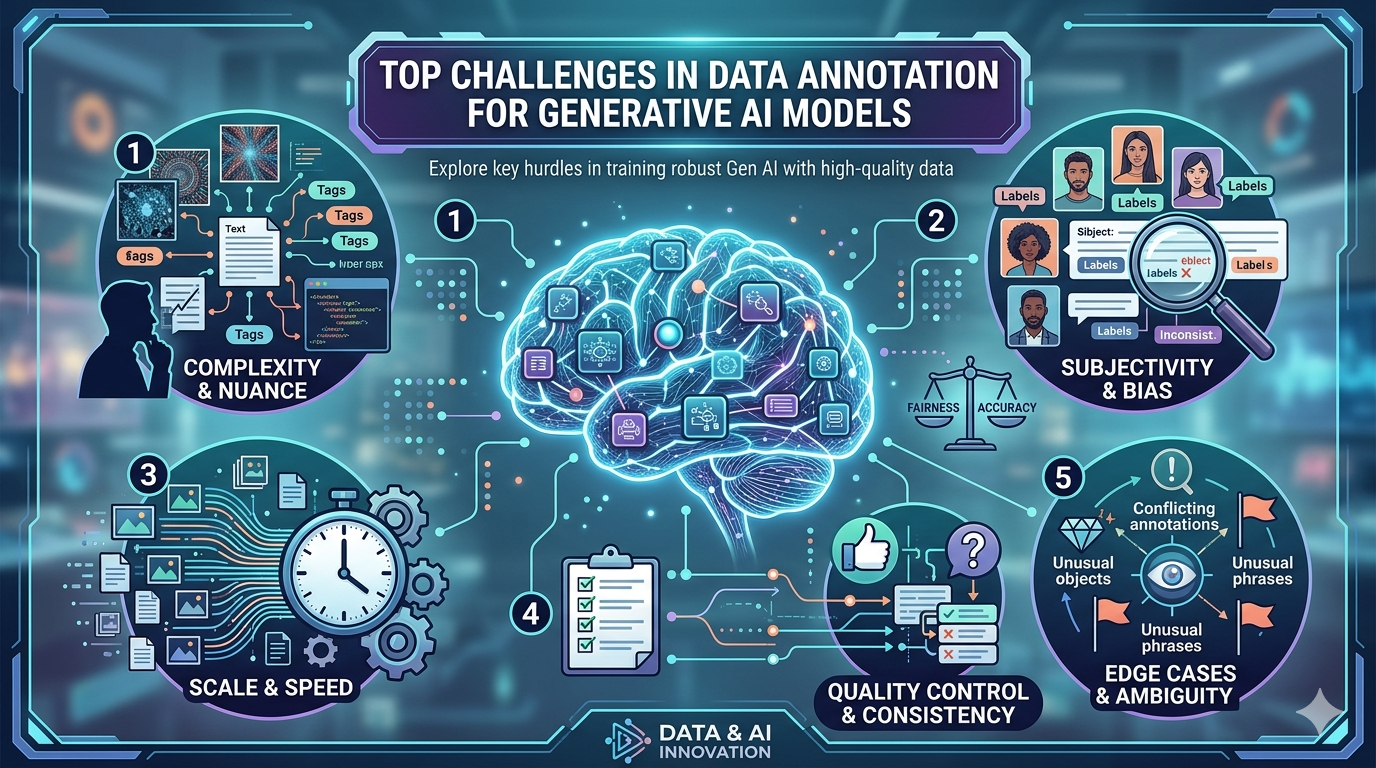

Generative AI systems are especially sensitive to annotation quality: inconsistency, bias, limited scalability, and gaps in domain expertise all become major bottlenecks.

1. Maintaining Annotation Consistency Across Large Datasets

One of the most significant challenges in Generative AI is maintaining consistency across millions of data points. Unlike traditional AI systems that may rely on straightforward labels, LLMs and generative models require nuanced annotations such as sentiment, intent, context, factual correctness, tone, and user preference.

For example, two annotators reviewing the same conversational response may interpret “helpfulness” differently. This inconsistency introduces noise into the dataset, which can negatively affect downstream model performance.

As datasets scale, maintaining standardized guidelines becomes increasingly difficult. Annotation teams often span multiple geographies, time zones, and expertise levels, which further increases variability.

A professional data annotation outsourcing partner addresses this challenge through:

- Detailed annotation playbooks

- Multi-level quality assurance workflows

- Regular calibration sessions

- Consensus-based review systems

Inconsistency between human annotators remains one of the most frequently cited challenges in data labeling workflows.
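One common way to quantify how consistently two annotators apply the same guideline is Cohen's kappa, which corrects raw agreement for agreement expected by chance. The following is a minimal sketch in plain Python; the function name `cohens_kappa` and the sample "helpful"/"unhelpful" labels are illustrative assumptions, not part of any specific annotation platform.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement, estimated from each annotator's own label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label for every item
    return (observed - expected) / (1 - expected)

# Hypothetical labels from two annotators rating the same six responses.
annotator_1 = ["helpful", "helpful", "unhelpful", "helpful", "unhelpful", "helpful"]
annotator_2 = ["helpful", "unhelpful", "unhelpful", "helpful", "unhelpful", "helpful"]

kappa = cohens_kappa(annotator_1, annotator_2)
print(f"kappa = {kappa:.2f}")
```

A score tracked per guideline and per annotator pair can indicate where a calibration session is most needed. What counts as an acceptable value depends on the task and is a project decision.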

2. Handling Subjective Labels in RLHF Workflows

When building aligned LLMs, RLHF data annotation introduces an additional layer of complexity: subjectivity.

Reinforcement Learning from Human Feedback depends on human evaluators ranking or comparing model outputs based on dimensions such as:

- relevance

- truthfulness

- harmlessness

- tone

- completeness

These criteria are often subjective and context-dependent. What one evaluator considers “more helpful,” another may see as verbose or off-topic.

This makes preference data annotation especially challenging because there is often no single objectively correct label. Instead, label quality depends heavily on well-defined evaluation rubrics and continuous reviewer training.

Work on fine-grained RLHF and targeted human feedback suggests that subjective labels benefit from more structured rubrics and selective expert review, both of which can improve alignment quality.

At Annotera, our RLHF data annotation services use domain-trained reviewers and standardized preference scoring frameworks to ensure more reliable feedback loops for LLM alignment.
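To illustrate how subjective pairwise preferences might be aggregated, here is a minimal sketch that takes several evaluators' votes on the same response pair, picks the majority preference, and flags low-agreement pairs for expert review. The function name `aggregate_preference`, the `min_agreement` threshold of 0.75, and the pair IDs are assumptions made for illustration. It is not a description of any particular vendor's scoring framework.

```python
from collections import Counter

def aggregate_preference(votes, min_agreement=0.75):
    """Aggregate evaluator votes ('A' or 'B') for one pair of model responses."""
    if not votes:
        raise ValueError("at least one vote is required")
    ranked = Counter(votes).most_common()
    top_label, top_count = ranked[0]
    # A tie means there is no majority preference to report.
    tied = len(ranked) > 1 and ranked[1][1] == top_count
    agreement = top_count / len(votes)
    return {
        "preferred": None if tied else top_label,
        "agreement": agreement,
        # Low-agreement pairs are escalated rather than silently resolved.
        "needs_expert_review": tied or agreement < min_agreement,
    }

# Hypothetical votes from four evaluators on two response pairs.
comparisons = {
    "pair-001": ["A", "A", "A", "B"],
    "pair-002": ["A", "B", "B", "A"],
}
results = {pair_id: aggregate_preference(v) for pair_id, v in comparisons.items()}
for pair_id, result in results.items():
    print(pair_id, result)
```

Routing only the contested pairs to senior reviewers keeps expert time focused on the examples where the rubric is least clear.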

3. Scaling Annotation for Massive Training Volumes

Generative AI models require enormous datasets for pre-training, fine-tuning, and continuous optimization. As enterprises expand use cases across customer support, finance, healthcare, and legal domains, annotation volume can grow rapidly.

The challenge is not just labeling more data—it is doing so without compromising quality.

Common scalability issues include:

- workforce management

- project turnaround delays

- reviewer fatigue

- rising operational costs

As model scale increases, annotation cost and time can become unmanageable without human-in-the-loop optimization and AI-assisted workflows.

This is where data annotation outsourcing becomes a strategic advantage. A specialized data annotation company like Annotera provides scalable teams, managed workflows, and AI-assisted pre-labeling systems that reduce time-to-delivery.
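As a rough illustration of how AI-assisted pre-labeling can reduce human workload, the sketch below routes model-generated pre-labels into different queues based on the model's confidence score. The `PreLabel` class, the queue names, and the thresholds (0.95 and 0.70) are all hypothetical. Real thresholds would need to be tuned against measured error rates.

```python
from dataclasses import dataclass

@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model's self-reported score in [0, 1]

def route_pre_labels(pre_labels, high=0.95, low=0.70):
    """Assign each pre-labeled item to a review queue by confidence band."""
    queues = {"spot_check": [], "human_review": [], "label_from_scratch": []}
    for item in pre_labels:
        if item.confidence >= high:
            # High confidence is still sampled, never blindly accepted.
            queues["spot_check"].append(item.item_id)
        elif item.confidence >= low:
            # A human confirms or corrects the suggested label.
            queues["human_review"].append(item.item_id)
        else:
            # The suggestion is too unreliable to show to the annotator.
            queues["label_from_scratch"].append(item.item_id)
    return queues

# Hypothetical batch of support-ticket pre-labels.
batch = [
    PreLabel("t-1", "billing", 0.98),
    PreLabel("t-2", "refund", 0.80),
    PreLabel("t-3", "billing", 0.40),
]
queues = route_pre_labels(batch)
print(queues)
```

The design choice here is that no band bypasses humans entirely. The bands only change how much human effort each item receives.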

4. Domain-Specific Expertise Requirements

Generic annotators are rarely sufficient for enterprise-grade Generative AI applications.

For example:

- legal AI requires contract and compliance expertise

- healthcare AI needs clinical terminology understanding

- finance models require contextual knowledge of regulations and risk

Without subject matter expertise, annotations may be technically correct but contextually flawed.

This challenge is especially relevant for LLM Fine-Tuning Data Services, where domain accuracy directly affects response quality.

Enterprise SFT datasets increasingly require domain experts rather than general-purpose labeling teams.

Annotera solves this by deploying domain-specific annotation specialists who understand the business context behind every label.

5. Data Bias and Fairness Challenges

Bias in training data is one of the most critical concerns in Generative AI.

Poorly balanced datasets can lead to:

- demographic bias

- cultural bias

- language bias

- response toxicity

- unfair recommendations

Even small annotation biases can be amplified during model training and surface as harmful outputs.

Bias remains a core challenge in data labeling and generative model training, especially when datasets lack representative coverage.

A mature data annotation company must include fairness audits, demographic balancing, and bias detection workflows as part of the annotation lifecycle.
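One simple form of bias detection is to compare how often a given label is applied across groups in the dataset. The sketch below is a minimal example under stated assumptions: the group names, the "toxic" label, and the 0.10 tolerance are hypothetical, and a flagged disparity is a prompt for human review rather than proof of bias.

```python
from collections import defaultdict

def label_rate_by_group(records, target_label):
    """Return the fraction of items in each group that received target_label."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, label in records:
        totals[group] += 1
        if label == target_label:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}

def flag_disparities(rates, overall_rate, tolerance=0.10):
    """List groups whose label rate differs from the overall rate by more than tolerance."""
    return sorted(g for g, r in rates.items() if abs(r - overall_rate) > tolerance)

# Hypothetical (group, label) pairs from a toxicity-labeling task.
records = [
    ("dialect_a", "toxic"), ("dialect_a", "ok"), ("dialect_a", "ok"), ("dialect_a", "ok"),
    ("dialect_b", "toxic"), ("dialect_b", "toxic"), ("dialect_b", "toxic"), ("dialect_b", "ok"),
]
overall = sum(label == "toxic" for _, label in records) / len(records)
rates = label_rate_by_group(records, "toxic")
flagged = flag_disparities(rates, overall)
print(rates, flagged)
```

The same per-group counts can also show whether a group is underrepresented in the dataset, which is the starting point for demographic balancing.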

6. Quality Control in AI-Assisted Annotation

AI-assisted annotation tools accelerate labeling, but they also introduce risk.

Auto-labeling systems can generate:

- incorrect entity labels

- hallucinated tags

- overconfident or miscalibrated confidence scores

- inconsistent preference rankings

For this reason, automated annotations should be validated against human-generated labels before they enter a training set.

At Annotera, we implement human-in-the-loop validation pipelines to ensure that AI-assisted workflows improve speed without sacrificing data integrity.
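A human-in-the-loop validation step can be as simple as comparing auto-generated labels against a human-labeled gold sample and rejecting the batch when accuracy falls below a threshold. The sketch below assumes labels are stored as dictionaries keyed by item ID. The function name `validate_against_gold` and the 0.9 threshold are illustrative assumptions.

```python
def validate_against_gold(auto_labels, gold_labels, min_accuracy=0.9):
    """Compare auto labels with human gold labels on the items both sets cover."""
    shared = sorted(set(auto_labels) & set(gold_labels))
    if not shared:
        raise ValueError("no overlapping items between auto and gold labels")
    # Items where the auto-labeler disagrees with the human reference.
    disagreements = [i for i in shared if auto_labels[i] != gold_labels[i]]
    accuracy = 1 - len(disagreements) / len(shared)
    return {
        "accuracy": accuracy,
        "disagreements": disagreements,
        "accepted": accuracy >= min_accuracy,
    }

# Hypothetical auto-labeled batch and a smaller human-labeled gold sample.
auto = {"d1": "PERSON", "d2": "ORG", "d3": "ORG", "d4": "DATE", "d5": "PERSON"}
gold = {"d1": "PERSON", "d2": "ORG", "d3": "LOCATION", "d4": "DATE"}

report = validate_against_gold(auto, gold)
print(report)
```

The list of disagreements is as useful as the accuracy figure, because it shows reviewers which label types the auto-labeler gets wrong.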

7. Security and Confidentiality of Training Data

Many enterprises work with highly sensitive data, including customer conversations, proprietary documentation, and regulated datasets.

This makes security a major challenge in annotation projects.

Organizations need:

- NDA-compliant operations

- access-controlled environments

- encrypted data workflows

- role-based permissions

- secure audit trails

This is especially important in LLM Fine-Tuning Data Services, where proprietary business data is used to train domain-specific models.

A trusted data annotation outsourcing partner must prioritize compliance and enterprise-grade security standards.
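To make role-based permissions and audit trails concrete, here is a minimal sketch of a permission check that records every access attempt. The role names, action names, and in-memory `audit_log` list are hypothetical. A production system would rely on an identity provider and tamper-resistant log storage instead.

```python
from datetime import datetime, timezone

# Hypothetical mapping of roles to the actions they may perform.
ROLE_PERMISSIONS = {
    "annotator": {"read_item", "submit_label"},
    "reviewer": {"read_item", "submit_label", "override_label"},
    "admin": {"read_item", "submit_label", "override_label", "export_dataset"},
}

audit_log = []  # in-memory stand-in for a secure audit trail

def authorize(user, role, action):
    """Return True if the role permits the action, and log the attempt either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
    })
    return allowed

# An annotator may not export data; an admin may. Both attempts are logged.
print(authorize("user-17", "annotator", "export_dataset"))
print(authorize("user-02", "admin", "export_dataset"))
print(len(audit_log))
```

Logging denied attempts alongside permitted ones is deliberate, since failed access attempts are often the most relevant entries in a security review.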

Why Choosing the Right Data Annotation Partner Matters

The complexity of Generative AI demands more than basic labeling support. Enterprises need a strategic partner capable of managing quality, scale, domain expertise, and alignment workflows.

This is where Annotera delivers value.

Our specialized services include:

- enterprise-grade RLHF data annotation

- supervised fine-tuning datasets

- domain-specific labeling teams

- multimodal data annotation

- secure LLM Fine-Tuning Data Services

- scalable data annotation outsourcing

Whether you are building foundation models or enterprise copilots, the quality of your annotated data will define the performance of your AI systems.

Conclusion

The success of Generative AI models depends heavily on the quality of the annotated data used to train them. From subjectivity in RLHF to scalability, bias, and domain expertise, annotation challenges continue to evolve alongside model complexity.

By partnering with an experienced data annotation company like Annotera, enterprises can overcome these challenges with confidence, accelerate deployment timelines, and improve model reliability.

As Generative AI adoption continues to grow, robust annotation workflows will remain the backbone of successful AI innovation.