Here is something worth thinking about.

Two companies begin a data science project on the same day. They have similar budgets, equally skilled professionals, and access to high-quality data.

One company deploys a working model within four months and saves millions of dollars.

The other company is still debating spreadsheet columns six months later.

What makes the difference?

It is not luck.

It is not a secret algorithm.

It is not even the size of the team.

The difference is how they manage the data science life cycle.

According to research published in Harvard Business Review, organizations that use structured and repeatable approaches are significantly more likely to achieve measurable business outcomes from analytics initiatives. Many companies, however, still approach every data science project as if it were a brand-new experiment.

Top companies do not improvise.

They follow a disciplined, repeatable process.

That process, centered on the data science life cycle, is what separates organizations that create real business value from those that simply collect data and hope for the best.

This article explores what leading companies do differently at every stage of the data science life cycle and what professionals can learn from their methods.

What Is the Data Science Life Cycle and Why Does It Matter?

The data science life cycle is the complete end-to-end process that turns a business question into a deployed and continuously improving data solution.

It starts with understanding the problem and continues through data collection, cleaning, exploratory analysis, modeling, evaluation, deployment, and monitoring.

It is called a life cycle because the process repeats. Models are retrained, assumptions are updated, and business goals evolve over time.

The core stages are:

- Business Problem Definition

- Data Collection

- Data Cleaning and Preparation

- Exploratory Data Analysis (EDA)

- Model Building

- Model Evaluation

- Deployment

- Monitoring and Improvement

Every successful data science project moves through these stages.

The difference is whether teams move through them deliberately or by accident.

Top companies do so deliberately.

Difference 1: They Define the Problem Before Touching the Data

Many teams jump straight into analyzing data because it feels productive.

Top companies do the opposite.

They first define the business problem with precision.

Before any data is collected, they establish a clear business question, specific success metrics, realistic timelines, and approval from both business and technical stakeholders.
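One way to make this checklist concrete is to record it as a small data structure that can be checked before work begins. The sketch below is only an illustration: the `ProjectCharter` class, its field names, the sample values, and the rule that at least two approvers are needed (one business, one technical) are all assumptions, not a standard.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class ProjectCharter:
    """Hypothetical record of what is agreed before any data is collected."""

    business_question: str
    success_metric: str   # e.g. "recall"
    target_value: float   # threshold the metric must reach
    deadline: date
    approvers: List[str] = field(default_factory=list)

    def missing_items(self) -> List[str]:
        """Return the names of charter items that are still undefined."""
        missing = []
        if not self.business_question.strip():
            missing.append("business_question")
        if not self.success_metric.strip():
            missing.append("success_metric")
        if self.target_value <= 0:
            missing.append("target_value")
        if len(self.approvers) < 2:
            # Assumption: one business and one technical sign-off are required.
            missing.append("approvers")
        return missing


# Made-up example values, loosely based on the predictive maintenance case.
charter = ProjectCharter(
    business_question="Which machines are likely to fail soon?",
    success_metric="recall",
    target_value=0.75,
    deadline=date(2025, 6, 30),
    approvers=["operations lead"],
)
print(charter.missing_items())  # ['approvers']
```

A team could refuse to start data collection until `missing_items()` returns an empty list.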

For example, a manufacturing company once asked its team to "use data to improve operations." The team spent months building a production forecasting model, only to discover that management actually wanted a predictive maintenance system to identify machine failures in advance.

The lesson is simple: without a clearly defined problem, even technically sound work can miss the mark.

IBM has consistently highlighted poor problem definition as one of the most common causes of analytics project failure.

Difference 2: They Treat Data Quality as Non-Negotiable

Experienced data scientists know that most of a project's time is spent preparing data.

Real-world datasets contain missing values, duplicates, invalid entries, and inconsistent formats.

Top companies treat data quality as a formal discipline rather than a tedious cleanup task.

They build automated pipelines that standardize values, validate records, and flag issues before they reach the modeling stage.

Instead of manually fixing errors in spreadsheets, they create repeatable systems that ensure every dataset meets the same quality standards.

This approach reduces costly mistakes and leads to more reliable models.
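As a rough sketch of what such a repeatable check might look like, the function below standardizes values, validates each record, and flags problems instead of silently fixing them. The field names (`order_id`, `amount`, `date`, `country`) and the rules themselves are hypothetical; a real pipeline would encode its own schema.

```python
import math
from datetime import datetime


def clean_records(records):
    """Standardize, validate, and de-duplicate raw records.

    Returns (clean, issues): the valid rows, plus a list of
    (row_index, reason) pairs for every rejected row.
    """
    clean, issues, seen_ids = [], [], set()
    for i, row in enumerate(records):
        order_id = str(row.get("order_id") or "").strip()
        if not order_id:
            issues.append((i, "missing order_id"))
            continue
        if order_id in seen_ids:
            issues.append((i, "duplicate order_id"))
            continue
        try:
            amount = float(row.get("amount"))
        except (TypeError, ValueError):
            issues.append((i, "invalid amount"))
            continue
        if not math.isfinite(amount) or amount < 0:
            issues.append((i, "invalid amount"))
            continue
        try:
            # Assumption: dates are expected in ISO format (YYYY-MM-DD).
            day = datetime.strptime(str(row.get("date") or "").strip(), "%Y-%m-%d").date()
        except ValueError:
            issues.append((i, "invalid date"))
            continue
        seen_ids.add(order_id)
        clean.append({
            "order_id": order_id,
            "amount": amount,
            "date": day,
            "country": str(row.get("country") or "").strip().upper(),
        })
    return clean, issues


# Made-up sample rows showing each kind of problem.
raw = [
    {"order_id": " A1 ", "amount": "19.90", "date": "2024-03-05", "country": "de"},
    {"order_id": "A1", "amount": "19.90", "date": "2024-03-05", "country": "DE"},
    {"order_id": "A2", "amount": "n/a", "date": "2024-03-06", "country": "fr"},
    {"order_id": "A3", "amount": "5", "date": "06/03/2024", "country": "fr"},
]
clean, issues = clean_records(raw)
print(len(clean), issues)
```

Because the same function runs on every dataset, the quality rules live in one place and rejected rows are logged for review.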

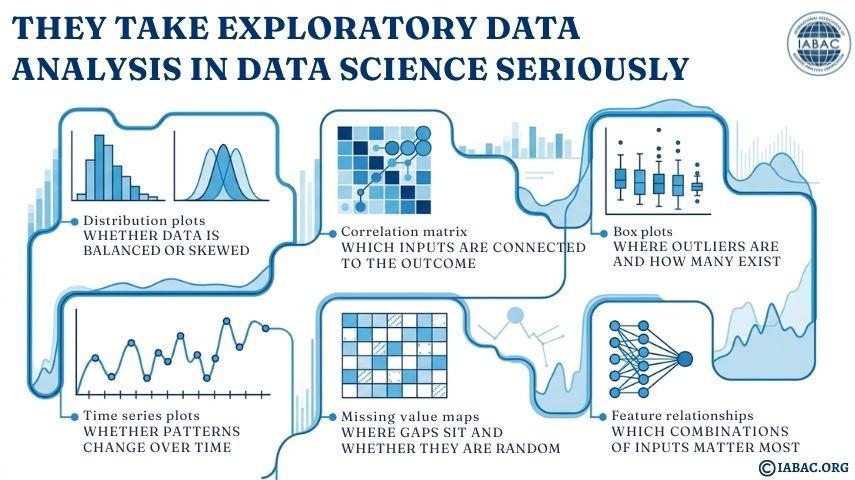

Difference 3: They Take Exploratory Data Analysis Seriously

Exploratory Data Analysis, or EDA, is where teams investigate the structure and patterns hidden in their data.

Leading companies use EDA to uncover relationships, identify outliers, and discover insights that may reshape the entire project.

They study distributions, correlations, trends over time, and unusual behaviors across customer or operational segments.

An e-commerce company, for instance, discovered during EDA that purchase rates consistently dipped on Tuesdays and Wednesdays. This insight led to a change in email campaign timing and increased conversions by 11 percent before any machine learning model was deployed.

EDA is often where the most valuable business discoveries are made.
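A weekday pattern like the one in the e-commerce example can surface from a very simple aggregation. The sketch below assumes session data arrives as `(date, purchased)` pairs, which is an invented format, and uses a handful of made-up sessions only to show the mechanics.

```python
from collections import defaultdict
from datetime import date

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]


def purchase_rate_by_weekday(sessions):
    """Return {weekday name: share of sessions that ended in a purchase}."""
    totals = defaultdict(int)
    purchases = defaultdict(int)
    for day, purchased in sessions:
        name = WEEKDAYS[day.weekday()]  # date.weekday(): Monday is 0
        totals[name] += 1
        purchases[name] += int(purchased)
    return {name: purchases[name] / totals[name] for name in totals}


# Made-up sessions: 2024-03-04 is a Monday, 2024-03-05 a Tuesday.
sessions = [
    (date(2024, 3, 4), True), (date(2024, 3, 4), False),
    (date(2024, 3, 4), True), (date(2024, 3, 4), False),
    (date(2024, 3, 5), True), (date(2024, 3, 5), False),
    (date(2024, 3, 5), False), (date(2024, 3, 5), False),
]
rates = purchase_rate_by_weekday(sessions)
print(rates)  # {'Monday': 0.5, 'Tuesday': 0.25}
```

On real data, a gap like this would need enough sessions per weekday to rule out noise before anyone acts on it.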

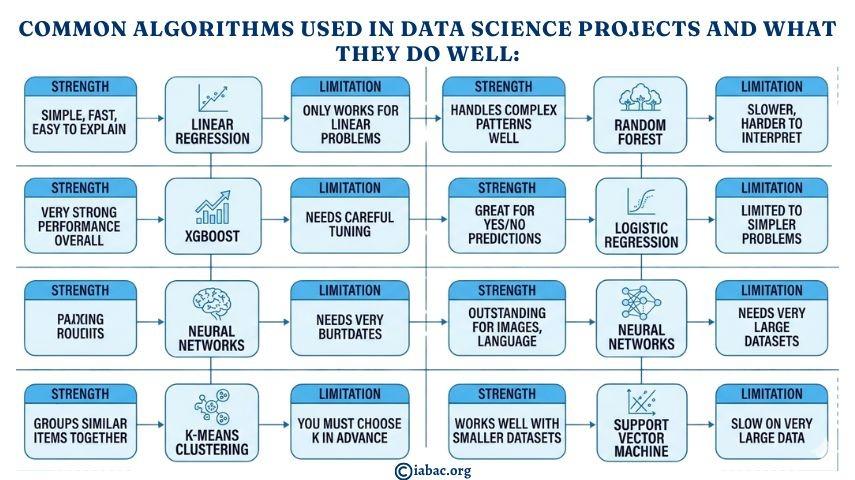

Difference 4: They Choose Models Based on Evidence, Not Habit

Some teams rely on the same algorithm for every project simply because they know it well.

Top companies compare multiple models and let performance data determine the winner.

They may evaluate linear regression, random forests, XGBoost, or neural networks, depending on the problem.

A key principle guiding this decision is the bias–variance tradeoff.

$$
\text{Total Error} = \text{Bias}^2 + \text{Variance} + \text{Irreducible Noise}
$$
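For readers who want the terms spelled out, the same decomposition can be written formally. The notation here is an assumption added for illustration: the target is modeled as $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, $\hat{f}$ is the model fitted on a random training set, and the expectation is taken over training sets and noise.

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{Bias}^2}
+ \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{Variance}}
+ \underbrace{\sigma^2}_{\text{Irreducible Noise}}
$$

Simple models tend to have a large first term and a small second term; flexible models tend to show the reverse. No model can reduce the third term.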

Top organizations test several models and select the one that performs best on objective metrics rather than personal preference.
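The selection step itself can be sketched in a few lines: fit every candidate on the same training split, score each on the same held-out split, and pick the best score. To stay self-contained, the example below swaps in two toy models (a mean baseline and a one-variable least-squares line) rather than the algorithms named above, and it uses synthetic data; all function names are illustrative.

```python
import math
import random


def fit_mean(xs, ys):
    """Baseline: always predict the training mean."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y


def fit_line(xs, ys):
    """Ordinary least squares for a single feature."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    intercept = my - slope * mx
    return lambda x: intercept + slope * x


def rmse(model, xs, ys):
    """Root mean squared error of model predictions on (xs, ys)."""
    return math.sqrt(sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(ys))


def select_model(candidates, train, valid):
    """Fit each candidate on train, score on valid, return (best name, scores)."""
    scores = {name: rmse(fit(*train), *valid) for name, fit in candidates.items()}
    return min(scores, key=scores.get), scores


# Synthetic data: a noisy straight line (seeded for repeatability).
rng = random.Random(0)
xs = [i / 10 for i in range(100)]
ys = [3.0 * x + 1.0 + rng.gauss(0, 0.5) for x in xs]
train = (xs[0::2], ys[0::2])   # even-indexed points for fitting
valid = (xs[1::2], ys[1::2])   # odd-indexed points held out for scoring

best, scores = select_model(
    {"mean baseline": fit_mean, "linear regression": fit_line}, train, valid
)
print(best, scores)
```

The important design choice is that every candidate sees exactly the same splits and the same metric, so the comparison reflects the models rather than the setup.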

Difference 5: They Use the Right Evaluation Metrics

A high-performing model on paper may still be useless if measured with the wrong metric.

Top companies define evaluation criteria before building the model.

Depending on the business problem, they may focus on accuracy, precision, recall, F1-score, AUC-ROC, RMSE, MAE, or MAPE.

For example, in medical diagnosis, recall is often more important than accuracy because missing a true case can have serious consequences.

By choosing metrics that reflect real-world business priorities, top companies ensure that models are both technically strong and operationally meaningful.
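A short calculation shows why the choice of metric matters. The sketch below computes accuracy, precision, recall, and F1 from binary labels; the screening scenario and its numbers are made up purely to illustrate how accuracy can look strong while recall is poor.

```python
def classification_metrics(y_true, y_pred):
    """Compute basic metrics for binary labels (1 = positive, 0 = negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical screening data: 10 true cases among 100 patients,
# and a model that catches only 2 of them.
y_true = [1] * 10 + [0] * 90
y_pred = [1] * 2 + [0] * 8 + [0] * 90
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Here accuracy is 0.92 even though recall is only 0.2, meaning eight of the ten true cases are missed.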

Difference 6: They Plan for Deployment From Day One

Many machine learning models never reach production because deployment is treated as an afterthought.

Leading companies design for deployment from the start.

They determine how predictions will be delivered, who will use them, what performance requirements must be met, and how errors will be handled.

Models may be deployed through REST APIs (built with a framework such as FastAPI), batch processing systems, cloud endpoints, streaming pipelines, or business dashboards.

Because deployment is considered early, models are more likely to be adopted and used successfully.
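The questions of how predictions are delivered and how errors are handled can be prototyped before any web framework is chosen. The sketch below is framework-agnostic: `handle_request` takes a parsed JSON payload and returns a response dictionary, and could later be wrapped in an API route. The input schema, the status codes, and `toy_model` are placeholders invented for illustration, not a real trained model.

```python
# Hypothetical input schema: field name -> type to cast to.
REQUIRED_FIELDS = {"distance_km": float, "carrier_score": float}


def toy_model(features):
    """Placeholder for a trained model; returns a risk score in [0, 1]."""
    score = 0.001 * features["distance_km"] + 0.5 * (1.0 - features["carrier_score"])
    return max(0.0, min(1.0, score))


def handle_request(payload, model=toy_model):
    """Validate the payload, call the model, and return a response dict."""
    if not isinstance(payload, dict):
        return {"status": 400, "error": "payload must be a JSON object"}

    features = {}
    for name, cast in REQUIRED_FIELDS.items():
        if name not in payload:
            return {"status": 400, "error": f"missing field: {name}"}
        try:
            features[name] = cast(payload[name])
        except (TypeError, ValueError):
            return {"status": 400, "error": f"invalid value for: {name}"}

    try:
        score = model(features)
    except Exception:
        # Report a generic failure instead of leaking internal details.
        return {"status": 500, "error": "prediction failed"}
    return {"status": 200, "prediction": score}


ok = handle_request({"distance_km": "200", "carrier_score": 0.9})
bad = handle_request({"distance_km": 200})
print(ok, bad)
```

Separating validation and error handling from the model call keeps the same logic reusable across an API, a batch job, or a dashboard backend.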

Difference 7: They Monitor Models After Deployment

Deploying a model is not the end of the data science life cycle.

Over time, data and business conditions change, causing model performance to decline.

This is known as model drift.

Input distributions may shift, customer behavior may change, or entirely new patterns may emerge.

Top companies continuously monitor performance and set automated alerts when key metrics fall below acceptable thresholds.

When necessary, models are retrained using recent data so that predictions remain accurate and relevant.
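One common way to quantify a shift in an input distribution is the Population Stability Index (PSI), which compares how a feature's values fall into bins at training time versus in recent data. The sketch below is a minimal version under stated assumptions: equal-width bins taken from the baseline range, a small floor to avoid dividing by zero, and an alert threshold of 0.25, which is a widely quoted rule of thumb rather than a fixed standard.

```python
import math


def psi(expected, actual, n_bins=10):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0  # fall back if all baseline values are equal

    def shares(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # Floor each share so the logarithm below is always defined.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(expected, actual, threshold=0.25):
    """Return True when PSI exceeds the (assumed) alert threshold."""
    return psi(expected, actual) > threshold


# Made-up feature values: a uniform baseline and a shifted recent sample.
baseline = [i % 100 for i in range(1000)]
shifted = [v + 40 for v in baseline]

print(psi(baseline, baseline), drift_alert(baseline, shifted))
```

A scheduled job could run a check like this for each input feature and trigger a retraining review when an alert fires.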

Difference 8: They Build Teams That Communicate Across Functions

The biggest obstacle to successful data science projects is often not technical.

It is communication.

If business stakeholders do not understand or trust a model, they will not use it.

Top companies build teams that can explain technical findings in clear business language.

They conduct regular stakeholder reviews, create intuitive dashboards, and ensure that analysts and decision-makers remain aligned throughout the project.

Communication is treated as a core professional skill rather than an optional extra.

The Role of Data Science Certifications

Mastering the full data science life cycle requires more than coding knowledge.

It requires structured learning and practical experience across business understanding, data preparation, modeling, deployment, and monitoring.

The International Association of Business Analytics Certifications (IABAC) offers globally recognized certifications designed around how data science is practiced in real organizations.

These programs help professionals demonstrate that they understand the complete journey from business problem to production-ready solution.

You can explore certification options at https://iabac.org/certifications.

A Real-World Example

A logistics company operating across twelve countries wanted to reduce shipment delays.

The team defined a clear objective: predict delays at least 48 hours in advance with a recall target of 75 percent.

They collected five years of shipping records along with weather, port congestion, and carrier performance data.

After cleaning and preparing more than 11 million records, exploratory analysis revealed that two ports had dramatically higher delay rates.

Several machine learning models were tested, and XGBoost delivered the best performance, exceeding the recall target.

The model was integrated into the company's operations dashboard and monitored weekly, with retraining performed monthly.

Within nine months, on-time delivery rates improved from 78.4 percent to 89.1 percent, customer complaints dropped by 43 percent, and annual savings reached $6.8 million.

This is what the data science life cycle looks like when executed with discipline.

Why Process Matters More Than the Model

Many people assume the machine learning model is the final product of a data science project.

Top companies understand that the real product is the process itself.

A strong data science life cycle can be repeated, refined, and scaled across dozens of business initiatives.

That repeatable process is what creates long-term competitive advantage.

For anyone building a career in data science, mastering the complete life cycle is far more valuable than focusing solely on algorithms or programming.

And for professionals who want global recognition of that expertise, certifications from respected organizations such as the International Association of Business Analytics Certifications provide a meaningful credential.

The top companies already know what works.

Now you do too.